When NOT to Use suspend in Kotlin: The Reporting API Anti-Pattern

When you start working with Kotlin coroutines, there’s a temptation to mark everything as suspend. After all, suspend functions compose nicely, the compiler helps you, and it feels like you’re writing “proper async code”. But suspend does not mean “cheap to call repeatedly” or “automatically non-blocking”. It means “this function can suspend execution”, so every caller has to wait for it to finish, and that waiting is a form of backpressure. Used incorrectly, it creates performance problems that are hard to spot until they hit production.

This article explores a common anti-pattern: using suspend for fire-and-forget APIs where it creates unwanted backpressure and hidden performance costs.

The Problem: Suspend All The Things

Consider a typical change tracking system. You have file changes happening, and you want to collect them for later processing:

    // Anti-pattern: reporting API is suspend
    interface FileChangesListener {
        suspend fun fileChanged(file: Path)
    }

    class FileChangeTracker(
        private val diffCollector: ChangeCollector
    ) : FileChangesListener {
        override suspend fun fileChanged(file: Path) {
            val group = createFileChange(file)
            diffCollector.addChanges(group)
        }
    }

    // The collector is also suspend
    interface ChangeCollector {
        suspend fun addChanges(change: FileChange)
    }

    class ChangeCollectorImpl : ChangeCollector {
        private val _changes = MutableStateFlow<List<FileChange>>(emptyList())
        private val _state = MutableStateFlow(ProcessingState.IDLE)

        override suspend fun addChanges(change: FileChange) {
            _changes.emit(_changes.value + change) // suspend
            _state.emit(ProcessingState.PROCESSING) // suspend
            invalidateChanges() // suspend - but does heavy work!
        }

        private suspend fun invalidateChanges() {
            // Expensive O(n) work on every call
            val allChanges = _changes.value
            for (change in allChanges) {
                computeDiff(change) // CPU-intensive
                updateIndex(change) // more work
                notifySubscribers(change) // even more work
            }
        }
    }

At first glance this looks reasonable. Functions are suspend, they compose, everything compiles. But there’s a critical performance bug hidden in the design.

When you call fileChanged(file1), then fileChanged(file2), then fileChanged(file3) (for example, in a loop), here’s what happens:

- fileChanged(file1) is called, which calls addChanges(group1)
- addChanges emits to the flow (instant) and calls invalidateChanges()
- invalidateChanges scans ALL changes and does expensive work for each
- Only after this completes, we move to fileChanged(file2)
- addChanges runs again, invalidateChanges scans ALL changes again (now including file1)
- This repeats for every file

If you add 100 files one by one, the first add analyzes one change, the second analyzes two, the third analyzes three, and so on. Every add triggers a full re-analysis of everything collected so far, so the total work is O(n²): the cost grows quadratically with the number of files.
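To make the growth concrete, here is a small counting sketch. It is not the collector from this article: analysesInline and analysesBatched are hypothetical helpers that only count how many per-change analyses each strategy would perform.

```kotlin
// Illustrative only: these helpers count analyses, they do not perform any.

// Inline strategy: every add re-scans everything collected so far.
fun analysesInline(fileCount: Int): Long {
    var total = 0L
    for (collected in 1..fileCount) {
        total += collected // the k-th add analyzes k changes
    }
    return total
}

// Batched strategy: one scan after all adds have been reported.
fun analysesBatched(fileCount: Int): Long = fileCount.toLong()

fun main() {
    println(analysesInline(100))  // 5050
    println(analysesBatched(100)) // 100
}
```

For 100 files that is 5050 analyses instead of 100, and the gap widens as the number of files grows.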

The worst part is that suspend creates the illusion that this is fine. The function signature says “I might suspend”, which developers interpret as “this won’t block threads, so it’s safe to call many times”. But suspend doesn’t mean “won’t block” or “cheap”. It means the function CAN suspend, but in this case invalidateChanges() does expensive CPU-intensive computation before any actual suspension. That computation occupies whichever thread the coroutine is running on, and on a single-threaded dispatcher (like Main) nothing else scheduled there can run until it finishes. And because the entire call chain is suspend, every caller up the stack waits for this expensive computation to finish.

When you read the implementation carefully, the problem becomes obvious. Let’s look at what addChanges() actually does:

- Updates _changes flow - even if you call emit(), MutableStateFlow.emit() returns instantly, it’s equivalent to assignment. The “proper” flow API is an illusion here.
- Updates _state flow - same thing, instant return
- invalidateChanges() - does expensive computation synchronously

The flow emissions don’t need suspend - MutableStateFlow.emit() never actually suspends, so you can use tryEmit() or direct assignment instead. But what about invalidateChanges()? This is where thinking about data flow upfront pays off.

If we’re already using StateFlow to hold changes, we have a reactive stream. Instead of processing on every add, we can:

- Process on demand: When someone actually needs the results (via some API), run invalidation then. This should be an explicit call (e.g., processChanges()), not hidden inside addChanges(). The caller who needs the result waits; reporters don’t. Hiding invalidation behind addChanges() makes the cost invisible to callers and is exactly how the performance bug appears.
- Process reactively with cancellation: Collect the changes flow in a background coroutine using collectLatest. When new changes arrive faster than processing completes, the previous invalidateChanges() gets cancelled - no wasted CPU on stale data:

    scope.launch {
        _changes.collectLatest { changes ->
            invalidateChanges(changes) // cancelled if new changes arrive
        }
    }

Either approach removes the need for suspend in addChanges(). Reporters just update the flow and return immediately. Processing happens elsewhere, on its own schedule, with proper cancellation support.

The suspend modifier in the original code serves no purpose except to create unwanted backpressure. Every caller waits for expensive processing they don’t care about. The signature says suspend fun addChanges() but should have been fun addChanges() from the start.

Why This Happens: The suspend Temptation

When you mark a fire-and-forget API as suspend, you give the implementation too much freedom. The developer implementing it sees suspend and thinks “I can call any suspend functions I want”. And they do - without thinking whether those calls are necessary or what their cost is.

The same O(n²) problem can happen in synchronous code, of course. But with suspend, there’s an illusion that it doesn’t matter because “everything is asynchronous anyway”. The developer calls invalidateChanges() on every add, thinking “it’s suspend, so it won’t block anything important”. But the caller still waits. The coroutine still executes sequentially. The quadratic cost is still there.

The suspend keyword creates a false sense of safety. Developers see it and think “this is async, so performance doesn’t matter here”. But async doesn’t mean free. The caller still pays the cost of waiting for whatever the implementation decides to do - even when the caller doesn’t need the result.

There’s another reason suspend makes performance problems harder to spot: profiler snapshots become harder to read.

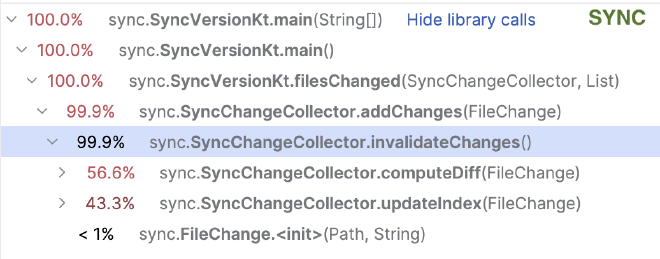

With synchronous code, you take a CPU snapshot and see a clear call tree:

The expensive invalidateChanges() shows up as a hot subtree. You see immediately where the time goes.

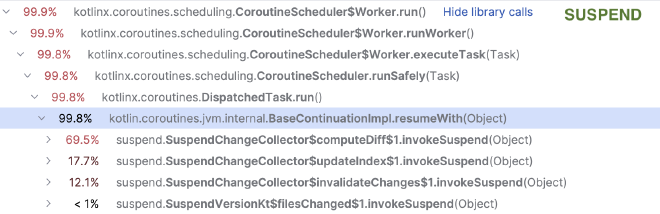

But with suspend, each suspension point splits the function into a separate continuation. If computeDiff() and updateIndex() are suspend functions, the profiler sees them as independent work items scheduled on the dispatcher:

Where’s invalidateChanges? Where’s addChanges? Where’s fileChanged? They don’t appear in the stack at all - each continuation is dispatched independently. You see $computeDiff$1.invokeSuspend and $updateIndex$1.invokeSuspend as separate entries, but the logical connection between them is lost.

Even though these screenshots show simple stack traces, real-world snapshots get much worse: suspend methods often have deep call chains, and the CPU profile gets smeared across many small continuation fragments instead of one obvious hot subtree. Profilers measure real CPU time, not logical coroutine execution - they can’t reassemble the suspend function call tree for you.

The Trade-off: Backpressure vs Memory

When designing fire-and-forget APIs, you face a fundamental choice: backpressure or buffering.

It’s useful to think of fire-and-forget data flow as a multi-stage waterfall: each stage processes what it gets, then pours the result into the next StateFlow. The boundary is the flow itself — the current stage doesn’t wait for what happens below. Most of the time this is sufficient. When you do need backpressure, the metaphor shifts from a waterfall to plumbing: you have valves, pressure, and explicit control over how fast the next stage is allowed to run.

Backpressure is a flow control mechanism where a slow consumer signals to a fast producer to slow down. With suspend, this happens naturally - the caller waits until the operation completes. If processing is slow, the caller slows down too. This protects you from overwhelming the system, but it slows down the producer (potentially freezing the UI).

Buffering means accepting events without waiting and storing them for later processing. This keeps the producer fast, but if events arrive faster than you can process them, the buffer grows. Without limits, you risk OOM.

A suspend function that returns Unit is still returning something meaningful: completion. The caller waits for that completion. Remove suspend, and you remove that waiting - but now you need another strategy to handle the flood.

When you make a fire-and-forget API non-suspend, you must think about what happens when events arrive faster than processing:

- Bounded buffer with drop: Use a channel with BufferOverflow.DROP_OLDEST or DROP_LATEST. Events get lost, but memory stays bounded.
- Conflation: Use Channel.CONFLATED or MutableStateFlow. Only the latest value matters; intermediate values are discarded.
- Merging: Combine multiple events into one. Instead of processing each file change separately, batch them into groups.

- Sampling: Process only every Nth event, or one event per time window.

The question isn’t just “should this be suspend?” It’s “what happens under load?”

    // Backpressure: caller waits if buffer full
    val bounded = Channel<FileChange>(capacity = 10)
    bounded.send(change) // suspends when full

    // Drop oldest: never suspends, may lose events
    val dropping = Channel<FileChange>(10, BufferOverflow.DROP_OLDEST)
    dropping.trySend(change) // always succeeds, drops old if full

    // Conflation: only latest matters
    val state = MutableStateFlow<List<FileChange>>(emptyList())
    state.update { it + change } // atomic update, never suspends

The right choice depends on your domain. Can you afford to lose events? Can you afford to slow down the producer? Can you merge events without losing information? There’s no universal answer, but you must ask the question.
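The merging strategy from the list above has no snippet of its own, so here is one possible sketch. It assumes events are buffered in a channel and that a consumer periodically takes everything that is currently queued as one batch. drainBatch is a hypothetical helper, not part of kotlinx.coroutines.

```kotlin
import kotlinx.coroutines.channels.Channel
import kotlinx.coroutines.channels.ReceiveChannel

// Hypothetical helper: collects whatever is buffered right now into one batch.
// It is a regular function because tryReceive() never suspends.
fun <T : Any> drainBatch(channel: ReceiveChannel<T>, maxSize: Int = Int.MAX_VALUE): List<T> {
    val batch = mutableListOf<T>()
    while (batch.size < maxSize) {
        // getOrNull() is null when the buffer is empty or the channel is closed
        val next = channel.tryReceive().getOrNull() ?: break
        batch += next
    }
    return batch
}

fun main() {
    val events = Channel<String>(Channel.UNLIMITED)
    listOf("a.kt", "b.kt", "c.kt").forEach { events.trySend(it) }

    println(drainBatch(events)) // [a.kt, b.kt, c.kt]
    println(drainBatch(events)) // []
}
```

The unlimited capacity here is only for the demonstration. In real code the buffer bound and overflow policy are exactly the decision this section is about.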

A Clearer Approach: Separate Reporting from Processing

Reporting APIs should be regular functions. Processing should happen in batches or with debouncing, and it should run in a separate coroutine (unlike the original approach where processing happens inline in addChanges()):

    import java.nio.file.Path
    import kotlinx.coroutines.CoroutineScope
    import kotlinx.coroutines.currentCoroutineContext
    import kotlinx.coroutines.ensureActive
    import kotlinx.coroutines.flow.MutableStateFlow
    import kotlinx.coroutines.flow.collectLatest
    import kotlinx.coroutines.flow.update
    import kotlinx.coroutines.launch

    // FileChange, ProcessingState and the helper functions are assumed to be defined elsewhere

    // Good: reporting API is a regular function
    interface FileChangesListener {
        fun fileChanged(file: Path)
    }

    class FileChangeTracker(
        private val diffCollector: ChangeCollector
    ) : FileChangesListener {
        override fun fileChanged(file: Path) {
            val group = createFileChange(file)
            diffCollector.addChanges(group)
        }
    }

    // The collector uses regular functions for adding, processes in batches
    interface ChangeCollector {
        fun addChanges(change: FileChange)
    }

    class ChangeCollectorImpl(
        private val scope: CoroutineScope
    ) : ChangeCollector {
        private val _changes = MutableStateFlow<List<FileChange>>(emptyList())
        private val _state = MutableStateFlow(ProcessingState.IDLE)

        init {
            // Process changes reactively - cancels previous processing if new changes arrive
            scope.launch {
                _changes.collectLatest { changes ->
                    if (changes.isNotEmpty()) {
                        _state.value = ProcessingState.PROCESSING
                        invalidateChanges(changes)
                        _state.value = ProcessingState.IDLE
                    }
                }
            }
        }

        override fun addChanges(change: FileChange) {
            _changes.update { it + change } // atomic
        }

        private suspend fun invalidateChanges(changes: List<FileChange>) {
            for (change in changes) {
                // check for cancellation; a plain suspend function has no CoroutineScope
                // receiver, so ensureActive() is called on the current context
                currentCoroutineContext().ensureActive()
                computeDiff(change)
                updateIndex(change)
            }
        }
    }

Now when you call fileChanged(file1), then fileChanged(file2), then fileChanged(file3) (for example, in a loop), here’s what happens:

- fileChanged(file1) updates the flow, collectLatest starts invalidateChanges()
- fileChanged(file2) updates the flow, collectLatest cancels the previous processing and restarts
- fileChanged(file3) updates the flow, cancels again and restarts
- All addChanges() calls return immediately - no waiting
- Only the final invalidateChanges() runs to completion with all three files

The ensureActive() check inside invalidateChanges() cooperates with cancellation - when new changes arrive, the in-progress loop exits early instead of wasting CPU on stale data.

In heavy burst scenarios, the complexity drops from O(n²) to O(n). Adding 100 files triggers one analysis of 100 files, not 100 analyses.

The key insight is separating the reporting interface from the processing implementation. Callers report changes through a regular function. The implementation batches them and processes asynchronously on its own schedule.
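The collectLatest version restarts processing on every update. If you would rather wait for a quiet period before starting at all, which is what debouncing means here, one possible sketch looks like this. It assumes the debounce operator from kotlinx.coroutines, which is marked @FlowPreview and therefore needs an opt-in. settled is a hypothetical name, and the 50 ms window is arbitrary.

```kotlin
import kotlinx.coroutines.FlowPreview
import kotlinx.coroutines.flow.Flow
import kotlinx.coroutines.flow.debounce
import kotlinx.coroutines.flow.flowOf
import kotlinx.coroutines.flow.toList
import kotlinx.coroutines.runBlocking

// Hypothetical helper: lets a snapshot through only after a quiet period.
@OptIn(FlowPreview::class)
fun <T> Flow<T>.settled(quietMillis: Long): Flow<T> = debounce(quietMillis)

fun main() = runBlocking {
    // Three snapshots arrive back to back, as in a burst of fileChanged() calls.
    val snapshots = flowOf(
        listOf("a.kt"),
        listOf("a.kt", "b.kt"),
        listOf("a.kt", "b.kt", "c.kt")
    )
    // Earlier snapshots are superseded before the quiet period elapses,
    // so the last element collected is the full three-file snapshot.
    println(snapshots.settled(50).toList().last())
}
```

In the collector this would sit in front of collectLatest, for example _changes.settled(50).collectLatest { ... }. That trades a small delay for fewer restarted runs.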

Alternative: Explicit Batch Processing

If you have a natural batch boundary, you can make it explicit. The caller controls when processing happens and gets the result:

    interface ChangeCollector {
        fun addChanges(change: FileChange)
        suspend fun processBatch(): ProcessingResult
    }

    // Note: real implementation should be thread-safe, this one only demonstrates the domain logic
    class ChangeCollectorImpl : ChangeCollector {
        private val pendingChanges = mutableListOf<FileChange>()

        override fun addChanges(change: FileChange) {
            pendingChanges.add(change)
        }

        override suspend fun processBatch(): ProcessingResult {
            if (pendingChanges.isEmpty()) return ProcessingResult.empty()
            val batch = pendingChanges.toList()
            pendingChanges.clear()
            return analyzeChanges(batch)
        }
    }

    // Usage
    suspend fun processFileChanges(files: List<Path>): ProcessingResult {
        files.forEach { file ->
            collector.addChanges(createFileChange(file))
        }
        return collector.processBatch() // caller waits and gets result
    }

This makes the batch boundary explicit. Adding is fast and non-blocking. Processing is suspend because the caller genuinely needs the result - and that’s a valid reason for suspend.

This approach works when you have clear batch boundaries - user actions, transaction boundaries, or request handling. For continuous streams without obvious batches, debouncing is cleaner.
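The batch example above notes that a real implementation should be thread-safe. One possible way to get there on the JVM, sketched under the assumption that an immutable list behind an AtomicReference is acceptable for your batch sizes, is shown below. PendingBatch is a hypothetical class, not part of the original code.

```kotlin
import java.util.concurrent.atomic.AtomicReference

// Hypothetical thread-safe buffer for pending changes.
class PendingBatch<T> {
    private val pending = AtomicReference<List<T>>(emptyList())

    // Regular function: appends atomically, retrying if another thread won the race.
    fun add(item: T) {
        pending.updateAndGet { it + item }
    }

    // Atomically takes everything collected so far and leaves an empty list behind,
    // so no change can slip in between "copy" and "clear".
    fun drain(): List<T> = pending.getAndSet(emptyList())
}

fun main() {
    val batch = PendingBatch<String>()
    batch.add("a.kt")
    batch.add("b.kt")
    println(batch.drain()) // [a.kt, b.kt]
    println(batch.drain()) // []
}
```

Each add copies the list, which is fine for small batches. For large ones a lock around a mutable list may be the better trade-off.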

When to Use suspend

Use suspend when you need one of these:

You need the result: The caller must wait for the operation to complete because they need the return value. Database queries, network calls, file reads - the caller can’t proceed without the data.

You need backpressure: You want to slow down callers when processing can’t keep up. A bounded channel that suspends on send() when full. A rate-limited API client that suspends when you hit the limit. The suspension is the feature, not a side effect.

You’re waiting for completion: The caller needs to know when an async operation finishes, even if there’s no return value. Waiting for a job to complete, waiting for a transaction to commit, waiting for a file to be written.

You likely don’t need suspend for:

Fire-and-forget operations: Reporting events, logging, sending notifications. The caller doesn’t care when or if processing happens. Use regular functions.

Simple data operations: Adding to a list, emitting to StateFlow, putting in a map. These operations complete immediately. Making them suspend just because you might process the data later is wrong. MutableStateFlow.value = x never suspends. MutableStateFlow.tryEmit(x) never suspends. MutableStateFlow.emit(x) is effectively the same as value = x (state flow is conflated and doesn’t do backpressure), so prefer direct assignment for clarity.

Calling suspend functions without waiting: If you’re just launching a coroutine or updating a MutableStateFlow, you’re not actually suspending. The function returns immediately. Don’t make it suspend. For channels, be careful: trySend() can fail and drop your event if the buffer is full. Either check the result and handle failures (at least log them), or make sure your buffer strategy (CONFLATED, DROP_OLDEST, unlimited) is intentional and you understand the trade-offs.
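As a rough illustration of the last two points, the sketch below reports an event through a regular function: a direct StateFlow assignment plus a trySend() whose result is checked. EventReporter and its dropped counter are hypothetical names. The counter is not thread-safe and only stands in for whatever failure handling fits your case.

```kotlin
import kotlinx.coroutines.channels.Channel
import kotlinx.coroutines.flow.MutableStateFlow

// Hypothetical reporter; names are illustrative, not from the original code.
class EventReporter(capacity: Int) {
    val latest = MutableStateFlow<String?>(null)
    val queue = Channel<String>(capacity)

    var dropped = 0
        private set

    // Regular function: neither call below can suspend.
    fun report(event: String) {
        latest.value = event              // plain assignment, returns immediately
        val result = queue.trySend(event) // fails instead of suspending when the buffer is full
        if (result.isFailure) dropped++   // handle the drop explicitly (here: count it)
    }
}

fun main() {
    val reporter = EventReporter(capacity = 2)
    listOf("a", "b", "c").forEach(reporter::report)
    println(reporter.latest.value) // c
    println(reporter.dropped)      // 1
}
```

With a capacity of two and no consumer, the third trySend() fails, which is the case the paragraph above warns about.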

Conclusion

Before adding suspend to a function, ask: does the caller need to wait? Does the caller need the result? Do I need backpressure here?

If the answer is no, don’t use suspend. Fire-and-forget APIs should be regular functions. Let the implementation handle async processing without forcing callers to participate.

When you see a codebase where everything is suspend, ask why. Does each function genuinely need to suspend the caller, or is it just “we’re using coroutines and it compiles”?